Master Advanced Prompt Engineering for Claude AI | Optimize LLM Outputs

Advanced prompt engineering for Claude AI is a set of techniques that shape how an LLM interprets instructions, manages context, and produces reliable outputs; by intentionally structuring prompts you change the model’s reasoning pathway and the format of its results to improve accuracy, fidelity, and safety. This guide explains what advanced prompt engineering means in practice for Claude AI, why it matters for LLM optimization, and how practitioners can apply methods like chain-of-thought prompting, few-shot prompting, role prompts, and tool-enhanced prompts to raise output quality. Many teams struggle to translate conceptual prompting tactics into repeatable production flows that balance latency, cost, and governance; this article tackles that gap with decision rules, templates, and integration checklists.

You will get concrete templates and a decision matrix mapping techniques to tasks, a safety checklist that maps prompt-level and system-level guardrails to mitigation steps, and pragmatic enterprise integration patterns that include API integration and observability considerations. The next sections cover core techniques, safety protocols aligned with AI safety best practices, step-by-step integration patterns for Claude AI, and current research trends such as retrieval-augmented prompting and self-consistency. Throughout, the focus is on methods you can adopt immediately and measure against production metrics.

How does advanced prompt engineering maximize Claude AI outputs?

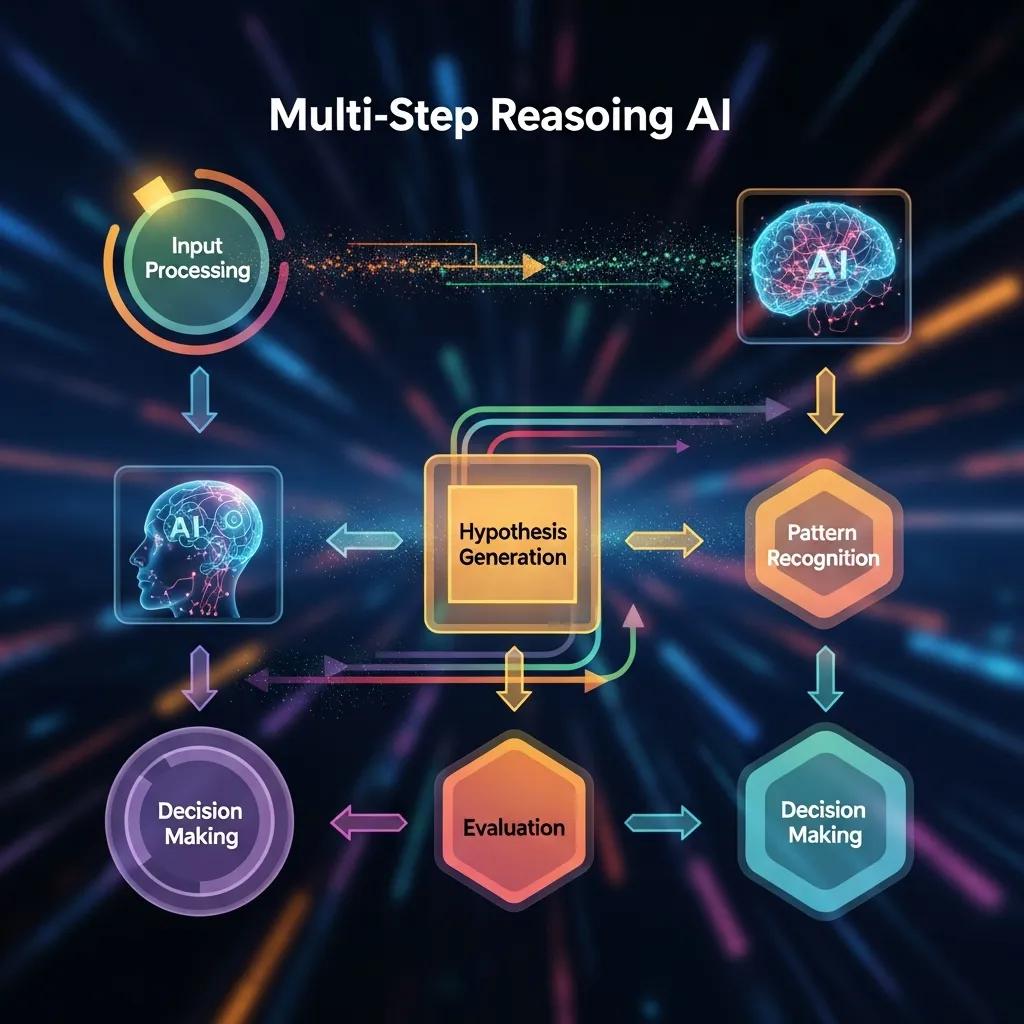

Advanced prompt engineering is the deliberate design of inputs to influence Claude AI’s internal reasoning and output form; by encoding structure, examples, and tool calls into prompts, you steer the model toward more accurate, consistent, and useful responses. The mechanism works by shaping the context window and activating latent behaviors—such as multi-step reasoning—so that the model’s probabilistic decoding aligns with task requirements, improving LLM optimization and downstream reliability. The result is measurable gains in accuracy, reduced need for post-processing, and clearer audit trails for governance; practitioners should apply a decision matrix mapping techniques to tasks when selecting an approach. Below is a practical comparison of core techniques to help you choose the right pattern for a given job.

This table compares the main prompting techniques, their best-fit tasks, and concise implementation notes to aid selection.

These techniques form the core of an “Advanced Prompt Engineering” hub that teams can use to design experiments and scale successful patterns across projects. Next, we look at concrete templates and short pseudo-prompts for core techniques that you can adapt immediately.

Core techniques for Claude prompting: chain-of-thought, few-shot, role prompts, and tool-enhanced prompts

Chain-of-thought prompting asks the model to articulate intermediate steps so multi-step problems become explicit and verifiable. Use a template that requests step-by-step reasoning followed by a concise final answer to capture both process and outcome. Few-shot prompting supplies curated examples that demonstrate the desired mapping from input to output; choose exemplars that cover edge cases and varied formats so Claude AI generalizes better. Role prompts set a persona or task role in the system message to bias tone and domain knowledge—this is effective for customer-facing automation or domain-specialist tasks. Tool-enhanced prompts integrate external calls (search, database, or function/tool invocations) and should include validation steps and post-call checks to ensure integrity. Performance trade-offs include increased latency and compute for chain-of-thought and tool calls, while few-shot prompting raises context-window usage; balance these against the need for accuracy and auditability.

- Example chain-of-thought pseudo-template: “List each reasoning step, then the final recommendation.”

- Example few-shot pseudo-template: “Example 1: [input] → [output]. Example 2: [input] → [output]. Now apply to new input.”

- Example role prompt pseudo-template: “You are an expert data analyst. Provide concise explanations and show assumptions.”

- Example tool-enhanced pseudo-template: “Call retrieval tool for facts, then synthesize results and cite the source.”

These templates help you trade off latency, cost, and reliability while optimizing outputs for production.
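The pseudo-templates above can be turned into small prompt builders. The following is a minimal sketch, not a definitive implementation: the function names are hypothetical, and the `system` plus `messages` request shape is an assumption about a generic chat-style client, so check your client library's documentation for the exact field names.

```python
from typing import Dict, List, Tuple


def chain_of_thought_prompt(task: str) -> str:
    """Ask for numbered reasoning steps followed by a short final answer."""
    return (
        f"{task}\n\n"
        "List each reasoning step as a numbered line, "
        "then give the final recommendation on a line starting with 'Final:'."
    )


def few_shot_prompt(examples: List[Tuple[str, str]], new_input: str) -> str:
    """Render input/output exemplars, then the new input to complete."""
    blocks = []
    for i, (inp, out) in enumerate(examples, start=1):
        blocks.append(f"Example {i}:\nInput: {inp}\nOutput: {out}")
    blocks.append(f"Now apply the same mapping.\nInput: {new_input}\nOutput:")
    return "\n\n".join(blocks)


def role_request(role: str, user_text: str) -> Dict[str, object]:
    """Put the persona in a system field and the task in a user message.

    The dict layout is an assumed, generic request shape.
    """
    return {
        "system": role,
        "messages": [{"role": "user", "content": user_text}],
    }
```

Keeping the builders as plain functions makes it easy to unit-test prompt text and to swap variants during experiments.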

When to deploy each technique to optimize task performance in Claude AI

Select prompting techniques with a decision matrix mapping techniques to tasks: multi-step reasoning typically benefits from chain-of-thought prompting, classification with scarce examples fits few-shot prompting, user-facing personalization uses role prompts, and tasks that depend on external data or computation rely on tool-enhanced prompts. For example, a diagnostic workflow with intermediate calculations should use chain-of-thought plus a verification stage; an intent classifier for varied phrasing should use few-shot exemplars covering synonyms and edge cases. Consider trade-offs: chain-of-thought increases token usage (higher cost and latency), few-shot consumes context but reduces engineering overhead, role prompts are low-cost and effective for tone, and tool-enhanced prompts add engineering complexity but enable external truth sources. Use this short rule-of-thumb list to guide selection.

- 1. Use chain-of-thought for tasks requiring transparent multi-step reasoning and auditability.

- 2. Use few-shot prompting for structured classification or transformation with limited labeled examples.

- 3. Use role prompts when you need consistent persona, tone, or domain framing in outputs.

- 4. Use tool-enhanced prompts when external data or deterministic computations are required.

Apply the decision matrix mapping techniques to tasks and run small A/B experiments to validate improvements; this empirical approach reduces risk and clarifies the best pattern for your workload.
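The rules of thumb above can be encoded as a small lookup so the choice is explicit and reviewable. This is a sketch under stated assumptions: the trait names are hypothetical labels invented for illustration, and a real matrix would likely carry more columns, such as cost and latency notes.

```python
from typing import List

# Hypothetical task traits mapped to the technique the rules above recommend.
DECISION_MATRIX = {
    "multi_step_reasoning": "chain-of-thought",
    "scarce_labeled_examples": "few-shot",
    "persona_or_tone": "role prompt",
    "external_data_or_computation": "tool-enhanced",
}


def choose_techniques(traits: List[str]) -> List[str]:
    """Return recommended techniques in input order, without duplicates.

    Unknown traits are ignored rather than guessed at.
    """
    chosen: List[str] = []
    for trait in traits:
        technique = DECISION_MATRIX.get(trait)
        if technique is not None and technique not in chosen:
            chosen.append(technique)
    return chosen
```

For a diagnostic workflow that needs both reasoning and a database lookup, `choose_techniques(["multi_step_reasoning", "external_data_or_computation"])` yields chain-of-thought followed by tool-enhanced prompting, which you can then validate with an A/B experiment.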

What safety protocols govern Claude AI prompt design?

Safety protocols and guardrails should be an integral part of prompt design for Claude AI, combining prompt-level constraints with system-level checks to reduce misuse and harmful outputs. System-level guardrails enforce policy and model behavior at runtime, while prompt-level design guides (clarifying instructions, constrained output formats, and refusal templates) reduce the chance of unsafe outputs at the source; together they form layered mitigation. Operationalizing these protocols requires testing (red-team style), monitoring for risky signals, and escalation paths that include human review. Anthropic’s stated commitment to AI safety and research should also inform governance frameworks and prompt-level standards.

Before the mitigation table below, note that teams can also set up alerting on public sources (for example, Google Alerts) and use Google Search Console to track how related public content is indexed, so that changes in public information or expectations surface early. The table maps common protocols to scope and practical mitigations.

Use these protocols to create a layered safety posture that reduces exposure while preserving utility. Next, practical prompt-level rules and monitoring signals help execute these controls in production.

Built-in guardrails, safety checks, and prompt design guidelines

System-level and prompt-level guardrails differ in scope but should be complementary: system-level filters and policy layers enforce enterprise policy across all calls, while prompt-level design ensures safe framing and constrained outputs for each interaction. Prompt-level rules include explicit constraints on output format, direct instructions to refuse unsafe requests, and the use of closed schemas for structured outputs. Example safe prompts instruct Claude AI to “return JSON with keys X, Y, Z” and include a refusal clause for hazardous topics. Testing should include red-team style prompt permutations and a validation corpus to detect regressions. Link onboarding documentation to the relevant product features and API integration guides so engineers apply guardrails consistently across implementations.

Prompt design rules to adopt:

- Always include explicit output format constraints.

- Embed refusal language for disallowed categories.

- Use exemplar-safe prompts in few-shot templates to demonstrate acceptable alternatives.

These steps reduce ambiguity and improve the model’s alignment with safety objectives.
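A closed schema is only useful if the calling code enforces it. The sketch below checks a model response against the placeholder keys X, Y, Z from the example prompt; the `refusal` key is an assumption about how your prompt asks the model to signal a refusal, not a built-in convention.

```python
import json
from typing import Any, Dict

REQUIRED_KEYS = {"X", "Y", "Z"}  # placeholder keys from the example prompt
REFUSAL_KEY = "refusal"          # hypothetical key the prompt requests on refusals


def parse_closed_schema(raw: str) -> Dict[str, Any]:
    """Parse model output and enforce a closed schema.

    Accepts either exactly the required keys or a single refusal key.
    Raises ValueError otherwise so the caller can retry or escalate.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    keys = set(data)
    if keys == {REFUSAL_KEY}:
        return data
    if keys != REQUIRED_KEYS:
        # Symmetric difference lists both missing and unexpected keys.
        raise ValueError(f"schema mismatch on keys: {sorted(keys ^ REQUIRED_KEYS)}")
    return data
```

Rejecting extra keys as well as missing ones keeps downstream consumers from silently depending on fields the prompt never promised.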

Managing risky or sensitive prompts and mitigation strategies

Detect risky prompts through monitoring signals such as high refusal rates, unusual token patterns, or increased use of sensitive keywords; log these incidents and surface them to a human-in-the-loop workflow for rapid triage. Fallback strategies include scripted refusal outputs, safe-response templates that offer alternatives, and escalation to domain experts when the model signals uncertainty. Maintain a catalog of monitoring signals and escalation steps to ensure consistent handling and to inform prompt revisions. Regular content updates and a cadence for red-team testing will reduce drift and surface new failure modes for remediation.

- 1. Detection heuristics: track anomalies in response length, refusal triggers, and user re-queries.

- 2. Fallback templates: provide neutral, safety-first responses and offer referrals to human support.

- 3. Escalation workflow: log events, notify reviewers, and maintain an audit trail for compliance.

Operationalizing these mitigations protects users and preserves trust while maintaining utility.
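The detection heuristics above can be sketched as a function over a window of recent interactions. The field names and thresholds here are illustrative assumptions; tune them against your own traffic before relying on them.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Interaction:
    """Hypothetical log record for one model call."""
    response_chars: int
    refused: bool
    requery_count: int


def triage_flags(window: List[Interaction],
                 max_refusal_rate: float = 0.2,
                 max_requeries: int = 2,
                 length_factor: float = 3.0) -> List[str]:
    """Return human-readable flags for a window of recent interactions."""
    if not window:
        return []
    flags: List[str] = []
    refusal_rate = sum(i.refused for i in window) / len(window)
    if refusal_rate > max_refusal_rate:
        flags.append(f"refusal rate {refusal_rate:.0%} exceeds threshold")
    mean_len = sum(i.response_chars for i in window) / len(window)
    if any(i.response_chars > length_factor * mean_len for i in window):
        flags.append("response length outlier")
    if any(i.requery_count > max_requeries for i in window):
        flags.append("repeated user re-queries")
    return flags
```

Any non-empty result would be logged and routed to the human review queue described in the escalation workflow.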

How to integrate Claude AI into enterprise workflows?

Integrating Claude AI into enterprise workflows means designing reliable request/response lifecycles, choosing appropriate API integration patterns, enforcing security controls, and implementing observability to maintain performance and governance. At a high level, integration spans synchronous request/response flows for chat interfaces, asynchronous job processing for batch tasks, and tool-call patterns for retrieval or external computation; each pattern has distinct latency, error-handling, and retry semantics. Security considerations include strong authentication, encryption in transit, least-privilege access to data sources, and logging for auditability; these are core to safe deployment and compliance. For product teams, https://claude.ai and the API integration guides should be referenced as the canonical sources for developer onboarding and enterprise documentation.

Below is a concise comparison of integration patterns, security considerations, and typical use-cases to guide architecture choices.

After implementation, monitor with observability tools, and consider Google Search Console for tracking public index changes that may affect content expectations.

API integration patterns, platform compatibility, and security considerations

Concrete API patterns include synchronous calls for conversational UX, asynchronous jobs for heavy extraction tasks, and tool-call orchestration where Claude AI triggers retrieval or executes functions. Each pattern requires specific retry and error-handling strategies; for example, idempotency keys for retries in async workflows and exponential backoff for rate-limited endpoints. Authentication should follow least-privilege principles and use scoped tokens; encrypt data in transit and at rest, and redact or tokenize sensitive fields before sending prompts. Observability should include request tracing, latency percentiles, and anomaly alerts to detect degradation. Teams can also track changes in public information with alerting tools such as Google Alerts so that integrations stay aligned with it.

- 1. Define call pattern: sync vs async vs tool-call.

- 2. Enforce auth and encryption: scoped tokens and TLS.

- 3. Implement observability: tracing, metrics, and alerting.

These steps enable secure, maintainable Claude integration platforms at scale.
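Two of the practices above, redacting sensitive fields and retrying with exponential backoff, fit in a short sketch. The `send` callable and the `RateLimited` exception are hypothetical stand-ins for your own client wrapper, and whether the server honors an idempotency key is an assumption to verify against the API you use. The email pattern is deliberately simple and will miss some formats.

```python
import random
import re
import time
import uuid
from typing import Callable

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(text: str) -> str:
    """Replace email addresses with a placeholder before building a prompt."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


class RateLimited(Exception):
    """Hypothetical error a client wrapper raises on a rate-limit response."""


def call_with_backoff(send: Callable[[str, str], str],
                      prompt: str,
                      max_attempts: int = 4,
                      base_delay: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> str:
    """Retry send(prompt, idempotency_key) with exponential backoff and jitter.

    The same key is reused on every attempt so that a server supporting
    idempotency keys can deduplicate retried work.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    key = str(uuid.uuid4())
    for attempt in range(max_attempts):
        try:
            return send(prompt, key)
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the function testable without real delays, and the jitter term spreads out retries from many clients that were rate-limited at the same moment.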

Use-case examples: customer support automation, data extraction, and decision support

Customer support automation commonly combines intent classification (few-shot prompting) with role prompts to produce consistent, branded responses and an escalation pipeline for complex tickets. Data extraction pipelines use structured output schemas and post-processing validators to convert unstructured text into tabular records; tool-enhanced prompting with retrieval reduces hallucination by grounding outputs in known facts. Decision support workflows rely on chain-of-thought prompting to surface reasoning steps and include human review for final judgments, ensuring compliance and accountability. For each use-case, validate outputs with automated metrics and human sampling, and instrument logging for traceability.

- 1. Customer support automation: intent classification → templated response → human escalation.

- 2. Data extraction: structured schema → validation rules → record insertion.

- 3. Decision support: chain-of-thought analysis → human review → final decision logging.

These examples demonstrate how Claude integration platforms can support practical enterprise needs such as customer support automation, data extraction, and decision support.
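The customer support flow (intent classification, templated response, human escalation) can be sketched as a single routing function. The intents, reply templates, and the idea that the classifier returns a confidence score are all assumptions for illustration; a model does not report calibrated confidence on its own, so the score might come from agreement across samples or a separate scorer.

```python
from typing import Callable, Dict, Tuple

# Hypothetical intents and canned replies; the bodies are placeholders.
TEMPLATES: Dict[str, str] = {
    "billing": "Thanks for reaching out about billing. A specialist summary follows.",
    "password_reset": "You can reset your password from the sign-in page.",
}


def route_ticket(text: str,
                 classify: Callable[[str], Tuple[str, float]],
                 min_confidence: float = 0.8) -> Dict[str, str]:
    """Classify a ticket, answer from a template, or escalate to a human.

    Escalates when the intent has no template or confidence is too low.
    """
    intent, confidence = classify(text)
    if intent in TEMPLATES and confidence >= min_confidence:
        return {"action": "reply", "intent": intent, "body": TEMPLATES[intent]}
    return {"action": "escalate", "intent": intent, "body": text}
```

Defaulting to escalation for unknown intents keeps the failure mode conservative: an unrecognized ticket reaches a person instead of receiving a mismatched template.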

What are current research trends in prompt engineering for Claude AI?

Current research trends focus on hybrid prompting methods and rigorous evaluation frameworks that blend retrieval-augmented prompting with tool-augmented pipelines, and on calibration techniques like self-consistency to improve robustness. Evaluation methods increasingly combine automated metrics with human-in-the-loop evaluation to assess factuality, coherence, and safety; reproducible small experiments help teams iterate rapidly. Practitioners should adopt structured schemas, such as schema.org vocabularies, to constrain outputs for downstream systems and to improve semantic indexing. To stay current, follow AI-focused publications for the latest research and set up Google Alerts for relevant topics.

The following list highlights promising techniques and recommended evaluation steps.

Emerging techniques to explore:

- 1. Retrieval-augmented prompting for grounding responses.

- 2. Self-consistency to reduce variance in reasoning.

- 3. Automated prompt evaluation pipelines combining BLEU/ROUGE-like metrics with targeted factuality checks.

Emerging techniques and evaluation methods for LLM prompts

Retrieval-augmented prompting integrates external knowledge sources at query time to reduce hallucination and improve factual grounding; pair it with tool-augmented pipelines for verification. Self-consistency techniques run multiple reasoning chains and aggregate answers to reduce stochastic errors, which is particularly useful for complex reasoning tasks. Evaluation should combine automated metrics (precision/recall, structured-field accuracy) with human annotation for safety and relevance; small reproducible experiments—A/B tests across prompt variants—help quantify improvements. Recommended benchmarks include task-specific suites for classification and extraction, and bespoke evaluation sets that reflect production distributions.
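Self-consistency as described above reduces to sampling several answers and taking a majority vote. In this minimal sketch, `sample` is a hypothetical callable that runs one reasoning chain at a non-zero temperature and returns only the final answer; the normalization step (strip and lowercase) is an assumption that suits short answers.

```python
from collections import Counter
from typing import Callable, Tuple


def self_consistent_answer(sample: Callable[[str], str],
                           prompt: str,
                           n: int = 5) -> Tuple[str, float]:
    """Sample n answers and return the most common one with its vote share."""
    if n < 1:
        raise ValueError("n must be at least 1")
    answers = [sample(prompt).strip().lower() for _ in range(n)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n
```

The vote share is a rough agreement signal: a low share suggests the chains disagree and the item may deserve human review. Cost scales linearly with `n`, so this is best reserved for tasks where errors are expensive.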

Research further explores how interleaving retrieval with chain-of-thought reasoning can significantly enhance performance for knowledge-intensive, multi-step questions.

Interleaving Retrieval with Chain-of-Thought for Multi-Step QA

Prompting-based large language models (LLMs) are surprisingly powerful at generating natural language reasoning steps or Chains-of-Thoughts (CoT) for multi-step question answering (QA). They struggle, however, when the necessary knowledge is either unavailable to the LLM or not up-to-date within its parameters. While using the question to retrieve relevant text from an external knowledge source helps LLMs, we observe that this one-step retrieve-and-read approach is insufficient for multi-step QA. Here, what to retrieve depends on what has already been derived, which in turn may depend on what was previously retrieved. To address this, we propose IRCoT, a new approach for multi-step QA that interleaves retrieval with steps (sentences) in a CoT, guiding the retrieval with CoT and in turn using retrieved results to improve CoT.

Interleaving retrieval with chain-of-thought reasoning for knowledge-intensive multi-step questions, H Trivedi, 2023

Retrieval-Augmented Generation (RAG) has also been reported to mitigate a key limitation of generative AI: its propensity to hallucinate.

Reducing LLM Hallucination with Retrieval-Augmented Generation

A current limitation of Generative AI (GenAI) is its propensity to hallucinate. While Large Language Models (LLM) have taken the world by storm, without eliminating or at least reducing hallucination, real-world GenAI systems will likely continue to face challenges in user adoption. In the process of deploying an enterprise application that produces workflows from natural language requirements, we devised a system leveraging Retrieval-Augmented Generation (RAG) to improve the quality of the structured output that represents such workflows. Thanks to our implementation of RAG, our proposed system significantly reduces hallucination and allows the generalization of our LLM to out-of-domain settings.

Reducing hallucination in structured outputs via Retrieval-Augmented Generation, O Ayala, 2024
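The general idea of grounding a prompt in retrieved text can be illustrated with a toy example. This is not the system from either cited paper: the word-overlap scoring is a stand-in for a real retriever (such as embedding search), and the function names are hypothetical.

```python
from typing import List


def retrieve(query: str, passages: List[str], k: int = 2) -> List[str]:
    """Rank passages by the number of lowercase words shared with the query.

    Toy scoring for illustration only; ties keep their original order.
    """
    query_words = set(query.lower().split())
    ranked = sorted(
        passages,
        key=lambda p: len(query_words & set(p.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def grounded_prompt(question: str, passages: List[str], k: int = 2) -> str:
    """Build a prompt that restricts the answer to numbered sources."""
    context = retrieve(question, passages, k)
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(context, start=1))
    return (
        "Answer using only the sources below and cite them by number. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{numbered}\n\nQuestion: {question}"
    )
```

Numbering the sources gives the model something concrete to cite, which in turn makes it possible to check each claim in the answer against the passage it points to.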

Practical evaluation steps:

- 1. Define task-specific metrics and an annotator rubric.

- 2. Run controlled prompt A/B tests and measure variance.

- 3. Log failures and feed them into prompt versioning and red-team cycles.

These methods create a feedback loop that improves both prompt design and model performance.
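A controlled A/B test of prompt variants needs little more than a labeled evaluation set and repeated runs. In this sketch, `run` is a hypothetical callable that wraps one prompt variant and returns the model's answer for an input; exact-match scoring is an assumption that fits classification and short-answer tasks.

```python
from statistics import mean, pstdev
from typing import Callable, Dict, List, Tuple


def evaluate_variant(run: Callable[[str], str],
                     cases: List[Tuple[str, str]],
                     repeats: int = 3) -> Dict[str, float]:
    """Score one prompt variant: mean accuracy and spread across repeated runs."""
    if not cases or repeats < 1:
        raise ValueError("need at least one case and one repeat")
    accuracies = []
    for _ in range(repeats):
        correct = sum(run(inp) == expected for inp, expected in cases)
        accuracies.append(correct / len(cases))
    return {"mean_accuracy": mean(accuracies), "stdev": pstdev(accuracies)}
```

Running both variants on the same cases and comparing `mean_accuracy` alongside `stdev` shows whether a difference is larger than run-to-run noise. Failed cases can then be logged and fed into prompt versioning.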

Incorporating safety governance and regulatory updates into prompt design

Operationalize governance through prompt versioning, audit logs, scheduled review cycles, and compliance monitoring to ensure that prompt changes are tracked and reversible. Maintain a cadence for red-team testing and update workflows when regulations change; integrate alerting tools such as Google Alerts into your monitoring stack to detect shifts in public expectations or regulatory guidance. Regularly update training and documentation, and enforce review and approval gates for prompt changes in production.

- 1. Version prompts and maintain audit logs for all changes.

- 2. Schedule periodic red-team testing and regulatory reviews.

- 3. Integrate semantic entity tracking and alerts into governance dashboards.

These governance practices help teams keep prompt lifecycles compliant, auditable, and aligned with evolving AI safety measures and integration strategies.
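Prompt versioning with an audit log and an approval gate can be sketched as a small in-memory registry. The class and method names are hypothetical, and a production system would persist this state in a database or version control instead of memory.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class PromptRegistry:
    """Hypothetical in-memory registry: hashed versions plus an append-only log."""
    versions: Dict[str, str] = field(default_factory=dict)  # version id -> text
    active: Dict[str, str] = field(default_factory=dict)    # prompt name -> version id
    audit_log: List[Dict[str, str]] = field(default_factory=list)

    def propose(self, name: str, text: str, author: str) -> str:
        """Store a candidate prompt and return its content-derived version id."""
        version = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        self.versions[version] = text
        self._log("propose", name, version, author)
        return version

    def approve(self, name: str, version: str, reviewer: str) -> None:
        """Approval gate: only a stored version can become active."""
        if version not in self.versions:
            raise KeyError(f"unknown version {version}")
        self.active[name] = version
        self._log("approve", name, version, reviewer)

    def current(self, name: str) -> str:
        """Return the text of the active version for a prompt name."""
        return self.versions[self.active[name]]

    def _log(self, action: str, name: str, version: str, actor: str) -> None:
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action, "name": name, "version": version, "actor": actor,
        })
```

Because version ids derive from the prompt text, a rollback is simply approving an earlier id again, and the log records who did it and when.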