# AI Implementation Strategy: How to Successfully Deploy AI in Your Organization

A practical AI implementation strategy translates high-level ambition into measurable outcomes by combining clear objectives, data readiness, governance, and the right talent and infrastructure. This guide shows how to define an AI strategy that aligns with business goals, prepare teams and systems for deployment, manage ethical and regulatory risk, and move pilots into enterprise-scale systems. Readers will gain an actionable roadmap for prioritizing use cases, assessing AI readiness, establishing data governance, assembling an AI-proficient team, and measuring ROI so investments deliver strategic value. Throughout the article we use targeted frameworks, checklists, and tables to make planning and execution repeatable, and we explain how to avoid the common organizational traps that derail AI initiatives. By following the steps below—strategy, readiness, team, governance, and scaling—you’ll be better equipped to deploy AI solutions that accelerate digital transformation and produce measurable business impact.

## How to Define a Robust AI Strategy Aligned with Business Goals and Digital Transformation

A robust AI strategy starts by defining specific business outcomes and the mechanisms by which AI will produce them, such as improved decision-making, workflow automation, or new product capabilities. This alignment matters because AI projects tend to succeed when objectives are measurable, prioritized by impact and feasibility, and tied to executive sponsorship; the result is an actionable roadmap with clear success metrics. Below we outline how to set objectives, prioritize use cases, and structure a phased roadmap that supports digital transformation while maintaining governance and measurement. This foundation leads naturally into a prioritized roadmap that stages assessment, pilots, and scaling with concrete gates and KPIs.

When translating business goals into AI objectives, use templates that link each objective to a metric, owner, and timeline. A simple three-step conversion method works well:

- identify the business outcome

- map potential AI capabilities that enable it

- define KPIs and success gates

Prioritization should balance value, data availability, technical feasibility, and compliance risk so high-value, low-risk pilots move first. The following list summarizes prioritization criteria to use when choosing initial AI projects.

- Value-to-customer: Projects that directly improve revenue, retention, or experience.

- Data availability: Use cases where structured, machine-readable data already exists.

- Feasibility: Solutions that require modest engineering effort and reuse existing models.

- Compliance risk: Projects with manageable privacy and regulatory exposure.

These criteria make trade-offs explicit and prepare the organization for realistic pilots; the next step is constructing a phased roadmap with timelines and gates.
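The four criteria above can be combined into a single weighted score. The following is a minimal sketch, not a prescribed method: the weights, the 1 to 5 scale, and the example use cases are all hypothetical and should be tuned to your organization.

```python
from dataclasses import dataclass

# Hypothetical weights (sum to 1.0); adjust to reflect your own priorities.
WEIGHTS = {
    "value": 0.40,
    "data_availability": 0.25,
    "feasibility": 0.20,
    "compliance_risk": 0.15,
}

@dataclass
class UseCase:
    name: str
    value: int              # 1 (low) to 5 (high)
    data_availability: int  # 1 (low) to 5 (high)
    feasibility: int        # 1 (low) to 5 (high)
    compliance_risk: int    # 1 (low risk) to 5 (high risk)

def priority_score(uc: UseCase) -> float:
    """Weighted score; compliance risk is inverted so lower risk scores higher."""
    return (
        WEIGHTS["value"] * uc.value
        + WEIGHTS["data_availability"] * uc.data_availability
        + WEIGHTS["feasibility"] * uc.feasibility
        + WEIGHTS["compliance_risk"] * (6 - uc.compliance_risk)
    )

def rank(use_cases):
    """Return use cases ordered from highest to lowest priority."""
    return sorted(use_cases, key=priority_score, reverse=True)

# Illustrative candidates only.
candidates = [
    UseCase("Support ticket triage", 4, 5, 4, 2),
    UseCase("Credit decision model", 5, 3, 2, 5),
    UseCase("Invoice data extraction", 3, 4, 5, 1),
]
ranked = rank(candidates)
for uc in ranked:
    print(f"{uc.name}: {priority_score(uc):.2f}")
```

In this illustration the high-value but high-risk credit model ranks last, which matches the guidance that high-value, low-risk pilots should move first.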

The roadmap should define assessment, pilot, and scale phases with explicit milestones, owners, and expected outcomes to limit scope creep. The table below compares the critical pillars of an AI deployment roadmap and the recommended actions that operationalize each one.

| Pillar | Key Attribute | Recommended Action |
|---|---|---|
| AI Readiness | Leadership & Sponsorship | Assign executive sponsor and measurable business KPIs |
| Data Governance | Quality & Compliance | Inventory data, ensure machine-readable formats, apply policies |
| Team | Skills & Roles | Define roles (ML engineers, data engineers, product owners) and plan augmentation |
| Ethics & Governance | Responsible AI controls | Implement audit trails, bias checks, and policy review boards |
| Monitoring | Model Performance | Set metrics, CI/CD for models, and automated alerts |

This comparison helps leadership decide where to focus initial investment and create realistic timelines that support digital transformation.


### What are the core AI objectives and vision to guide deployment?

Core AI objectives should be specific, measurable, and tied to business outcomes such as revenue growth, cost reduction, experience improvement, or risk mitigation. Objectives drive deployment when strategic goals are converted into discrete AI use cases, each with a defined owner, KPIs, and success criteria; this creates clarity and accountability. Examples of objective templates include reducing average handling time by X%, increasing lead conversion by Y percentage points, or automating N manual steps per week. Use a prioritization matrix to score prospective use cases on impact and feasibility, then select a balanced portfolio of quick wins and strategic bets.

Translate objectives into pilot charters that limit scope, define data sources, and set timelines for proof-of-value; this prevents runaway projects and provides early signals for scaling. When pilots validate value and technical feasibility, the next step is preparing the organizational and technical infrastructure needed to scale successfully.

### How can you build an actionable AI roadmap that supports digital transformation?

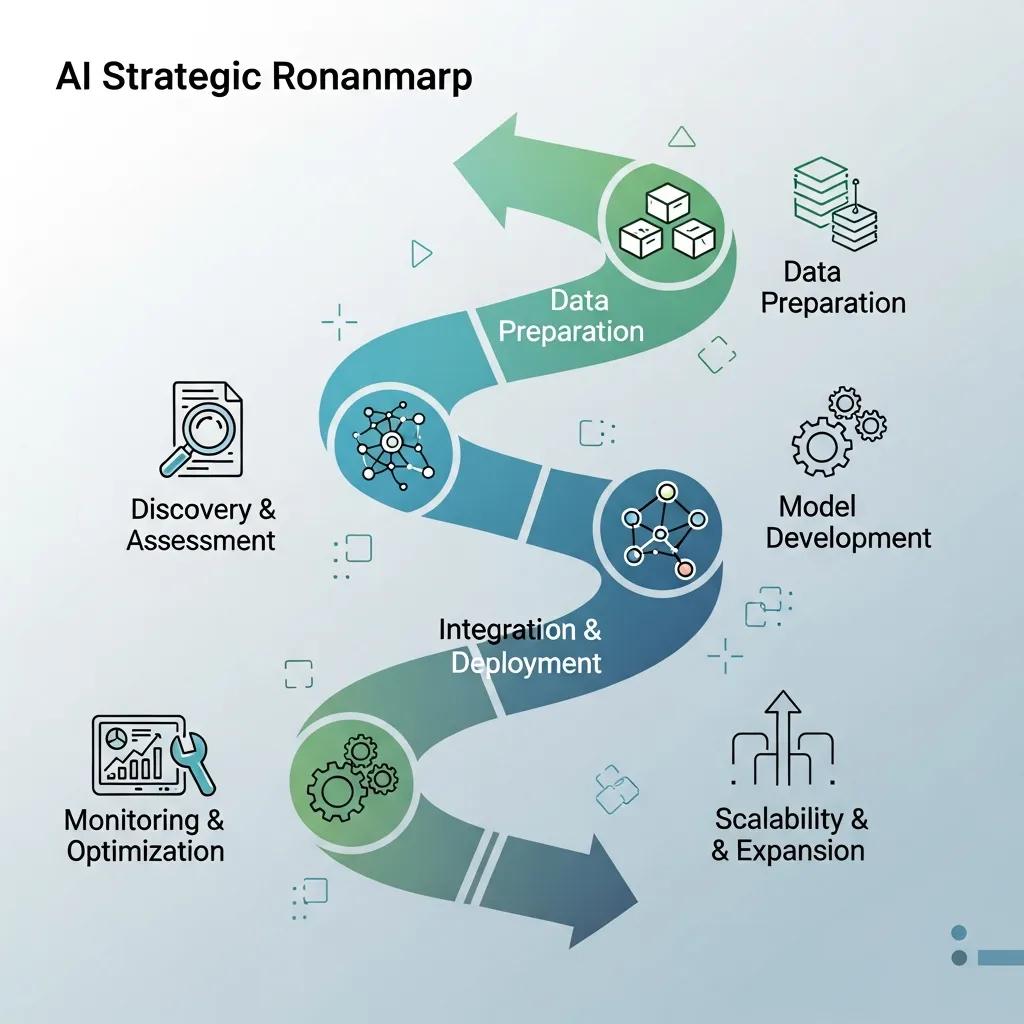

An actionable AI roadmap defines three phases (assess, pilot, scale) with gating criteria and resource allocation, and maps dependencies across data, infrastructure, and people. In the assessment phase, inventory data and systems and run an AI readiness check; in the pilot phase, run MVP experiments with clear KPIs; in the scale phase, harden models, automate retraining, and integrate with production systems. Typical timing guidance: assessments take 4–8 weeks, pilots 8–16 weeks, and initial scaling 3–9 months depending on scope and regulatory complexity.

A sample phased roadmap might include specific milestones: data ingestion pipelines built, model prototype validated with A/B testing, compliance audit performed, and CI/CD for models operationalized. Use the pillars table above to map responsibilities and ensure each phase has clear acceptance criteria so pilot results can drive funding and organizational change.
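Acceptance criteria are easier to enforce when each gate is written down as a KPI with a target. The sketch below shows one way to check pilot results against such gates; the KPI names, targets, and results are hypothetical placeholders.

```python
from dataclasses import dataclass

@dataclass
class Gate:
    kpi: str
    target: float
    higher_is_better: bool = True

def evaluate_gates(gates, results):
    """Return (passed, failures); a KPI with no measurement counts as a failure."""
    failures = []
    for gate in gates:
        observed = results.get(gate.kpi)
        if observed is None:
            failures.append(f"{gate.kpi}: no measurement")
            continue
        if gate.higher_is_better:
            ok = observed >= gate.target
        else:
            ok = observed <= gate.target
        if not ok:
            failures.append(f"{gate.kpi}: observed {observed}, target {gate.target}")
    return (not failures, failures)

# Hypothetical pilot-to-scale gates and measured results.
pilot_gates = [
    Gate("precision", 0.85),
    Gate("avg_handling_time_reduction_pct", 10.0),
    Gate("p95_latency_ms", 500.0, higher_is_better=False),
]
pilot_results = {
    "precision": 0.88,
    "avg_handling_time_reduction_pct": 7.5,
    "p95_latency_ms": 420.0,
}

passed, failures = evaluate_gates(pilot_gates, pilot_results)
print("Proceed to scale" if passed else f"Hold: {failures}")
```

Here the pilot is held because the handling-time reduction misses its target, even though accuracy and latency pass. Treating a missing measurement as a failure keeps unmeasured pilots from slipping through a gate.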

## How to Prepare Your Organization for AI: Readiness, Data Governance, and Infrastructure

Preparing an organization for AI requires assessing leadership alignment, data maturity, skills, and technical infrastructure so that models have reliable access to quality data and operational support. Readiness means that people, processes, and platforms are synchronized to support iterative model development and safe production deployment; the benefit is fewer stalled pilots and faster time-to-value. Below are practical checklists and governance steps that reduce implementation friction and prepare systems for production-level AI.

AI systems must be able to access data appropriately. That means storing data in a structured, machine-readable format and complying with relevant privacy regulations and security best practices, especially when sensitive data is involved. The readiness checklist below helps teams convert gaps into concrete remediation tasks.

- Leadership alignment: Confirm executive sponsor and business KPIs are defined.

- Data inventory: Catalog datasets, formats, and owners; flag sensitive fields.

- Technology stack: Validate cloud, MLOps, and pipeline tools are available and secure.

- Skills and roles: Identify training needs and augmentation gaps.

Closing these gaps enables a smoother pilot phase and reduces the risk of scaling failures; the next subsection explains how to assess readiness in detail.

### What is AI readiness and how do you assess organizational preparedness?

AI readiness covers leadership alignment, data availability and quality, technical platforms, talent, and change-management processes that enable deployment at scale. A practical assessment uses a scoring rubric across dimensions—leadership, data, technology, skills, and process—with remediation actions tied to each low-scoring area. For example, low data maturity triggers prioritized data engineering sprints; weak sponsorship requires executive workshops to align KPIs.

Further emphasizing the importance of a thorough evaluation, research highlights key factors for assessing an organization’s preparedness for AI adoption.

Organizational AI Readiness Assessment Factors

An informed decision regarding an organization’s readiness increases the probability of successful AI adoption and is important to successfully leverage AI’s business value. Thus, companies need to assess whether their assets, capabilities, and commitment are ready for the individual AI adoption purpose. The paper presents five categories of AI readiness factors and their illustrative actionable indicators, providing a sound set of organizational AI readiness factors and corresponding indicators for AI readiness assessments.

Ready or Not, AI Comes: An Interview Study of Organizational AI Readiness Factors, J. Jöhnk et al., 2021

An assessment matrix clarifies priorities and creates a roadmap for remediation; once remediation work begins, teams can plan a pilot with fewer blockers and clearer success criteria.
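A scoring rubric of this kind can be kept very simple. The sketch below scores the five dimensions named above on a hypothetical 1 to 5 scale and returns remediation actions for anything under a threshold. The data and leadership remediations come from the examples in this section; the other three, the scale, and the threshold of 3 are illustrative assumptions.

```python
# Remediation action per readiness dimension.
REMEDIATION = {
    "leadership": "Run executive workshops to align sponsorship and KPIs",
    "data": "Schedule prioritized data engineering sprints",
    "technology": "Close platform gaps (cloud, MLOps, pipelines)",   # assumption
    "skills": "Plan training and team augmentation",                 # assumption
    "process": "Define change-management and release processes",     # assumption
}

def assess_readiness(scores, threshold=3):
    """Return (overall_average, remediation_plan) for 1-5 dimension scores.

    The plan lists low-scoring dimensions, lowest score first.
    """
    missing = set(REMEDIATION) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    overall = sum(scores[d] for d in REMEDIATION) / len(REMEDIATION)
    gaps = sorted(
        (d for d in REMEDIATION if scores[d] < threshold),
        key=lambda d: scores[d],
    )
    return overall, [(d, REMEDIATION[d]) for d in gaps]

# Hypothetical assessment results.
scores = {"leadership": 4, "data": 2, "technology": 3, "skills": 1, "process": 3}
overall, plan = assess_readiness(scores)
print(f"Overall readiness: {overall:.1f} / 5")
for dimension, action in plan:
    print(f"- {dimension}: {action}")
```

Sorting the gaps by score puts the weakest dimension at the top of the remediation plan, which is usually where the first sprint should go.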

### What data governance and infrastructure are essential for AI deployment?

Effective data governance defines stewardship, access controls, lineage, and quality gates while aligning with applicable laws and frameworks such as GDPR, CCPA, and the EU AI Act; practical elements include data catalogs, masking for sensitive fields, and role-based access controls. At the infrastructure level, cloud platforms, data lakes, MLOps pipelines, and secure APIs are typical components required to support repeatable model training and safe inference.

Mapping governance controls to compliance regimes and implementing automated data quality checks reduces bias exposure and audit risk; this technical baseline also informs team composition and augmentation needs described next.
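To make masking and role-based access concrete, here is a minimal sketch using only the Python standard library. The field names, roles, and key are hypothetical. Keyed hashing of this kind is pseudonymization rather than anonymization, so whether it satisfies a given regulation is a question for your compliance owner.

```python
import hashlib
import hmac

# Hypothetical policy: which fields are sensitive and what each role may see.
SENSITIVE_FIELDS = {"email", "national_id"}
ROLE_ACCESS = {
    "data_steward": "raw",
    "ml_engineer": "masked",
    "analyst": "masked",
}

def mask_value(value, key: bytes) -> str:
    """Replace a value with a keyed hash so records stay joinable but unreadable."""
    digest = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]

def read_record(record: dict, role: str, key: bytes) -> dict:
    """Return the record as the given role is allowed to see it."""
    access = ROLE_ACCESS.get(role)
    if access is None:
        raise PermissionError(f"role {role!r} has no data access")
    if access == "raw":
        return dict(record)
    return {
        field: mask_value(value, key) if field in SENSITIVE_FIELDS else value
        for field, value in record.items()
    }

record = {"customer_id": 1042, "email": "ana@example.com", "plan": "pro"}
key = b"demo-key-store-real-keys-in-a-secret-manager"

masked = read_record(record, "ml_engineer", key)
print(masked)
```

Because the hash is deterministic for a given key, the same email always maps to the same token, so masked datasets can still be joined for model training.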

## Building an AI-Proficient Team and Growth Through Team Augmentation

An AI-proficient team combines product ownership, data engineering, ML engineering, data science, and MLOps to deliver end-to-end solutions. It works through cross-functional collaboration in which each role keeps its part of the value chain functioning reliably. The benefit is faster, higher-quality deployments that can be productionized and monitored. Below we list core roles, training approaches, and augmentation models that accelerate capability maturation.

Where in-house capacity is limited, team augmentation can strengthen the in-house team with skilled external professionals, supporting scalability, cost reduction, improved productivity, and process efficiency while the organization keeps full transparency and operational control. The core roles are:

- Data Engineer: Builds pipelines, ensures data quality, and manages ETL processes.

- ML Engineer: Productionizes models, manages serving, and implements CI/CD for models.

- Data Scientist: Designs experiments, creates models, and validates performance.

- Product Owner: Defines business requirements, success metrics, and prioritization.

This role mix supports both pilot and scale phases and helps organizations decide when to hire permanently versus augment with external specialists described next.

### What does an AI-proficient team look like?

An AI-proficient team is small, cross-functional, and outcome-driven, typically including a product owner, data engineer, ML engineer, data scientist, MLOps engineer, and a data steward or compliance owner. Responsibilities are clear: product owners drive business alignment, engineers handle pipelines and productionization, and data scientists design models and evaluations; this structure minimizes handoffs and accelerates iteration. For pilot stages, a compact team of 4–6 people can validate value; for scale, invest in MLOps and governance roles. Training pathways include role-based bootcamps, mentorship, and shadowing augmented staff to transfer knowledge.

Documenting role charters and onboarding processes ensures consistent performance as teams scale and supports eventual handover from external contributors.

### How can external talent augmentation accelerate AI initiatives?

External talent augmentation speeds initiatives by adding experienced practitioners who fill immediate skills gaps, shorten ramp time, and provide specific expertise such as MLOps or cloud-native deployment. Typical engagement models include short-term contractors, dedicated augmentation teams, or project-based delivery; choose the model that balances speed, cost, and knowledge transfer. Measure augmentation impact with metrics like ramp time, deliverable quality, and knowledge-transfer completion. Done well, augmentation strengthens the in-house team with skilled professionals, which supports scalability and cost reduction.

Onboarding and governance for external teams should include documentation standards, code review practices, and defined knowledge-transfer milestones so augmentation accelerates capability rather than creating dependency.

## Ethical AI, Governance, and Risk Management for Deployments

Ethical AI and governance frameworks ensure deployed systems are auditable, fair, and compliant; the mechanism is to bake audits, bias detection, and documentation into the ML lifecycle so models remain trustworthy as they evolve. The benefit is lower legal and reputational risk and greater user trust. Below we present governance models, mitigation techniques, and practical policy recommendations to operationalize responsible AI.


Governance frameworks typically include a governance committee, data stewards, model reviewers, and an ethics officer responsible for policy enforcement and audit trails; the next list outlines a practical governance checklist.

- Establish a governance committee with defined review cycles and approvals.

- Implement bias detection tests and fairness audits during model validation.

- Maintain documented model lineage, decisions, and data provenance for audits.

These controls reduce the likelihood of compliance failures and provide evidence for regulators and stakeholders; detailed mitigation tactics follow.

### What governance frameworks guide Responsible AI deployment?

Responsible AI governance commonly uses a multi-tier model with policy and review committees, technical guardrails (testing and monitoring), and operational roles like ethics officers and data stewards. Practical elements include documented approval flows, regular audits, and checklists for model release that ensure traceability and accountability. Recommended roles: ethics officer, data steward, model reviewer; create an approvals playbook and integrate review steps into sprint cadences.

To ensure comprehensive and ethical deployment, detailed guidelines and checklists are crucial for reporting and implementing AI models responsibly.

AI Model Reporting Guidelines & Ethical Implementation Checklist

We aimed to develop a comprehensive set of guidelines and a checklist for reporting studies that develop and utilize AI models in healthcare, covering all essential components of AI research regardless of the study design. The key elements essential for AI model reporting were identified and organized into structured sections, including study design, data preparation, model training and evaluation, ethical considerations, and clinical implementation.

Comprehensive reporting guidelines and checklist for studies developing and utilizing artificial intelligence models, SG Kwak, 2025

Embedding these governance steps into delivery processes ensures models cannot be released without appropriate checks, which supports scaling efforts described later.

### How do you mitigate risk, bias, and compliance in AI projects?

Mitigation techniques include data audits for representativeness, fairness tests during validation, role-based access controls for sensitive features, and continuous monitoring for drift and performance degradation. Practical governance includes automated alerts for metric changes, a documented incident response plan, and periodic compliance reviews tied to GDPR, CCPA, or the AI Act where relevant. The challenge is fundamentally organizational rather than technical—successful AI adoption requires addressing leadership alignment, cultural readiness, and organized skill building.

Implementing these controls reduces regulatory exposure and maintains model performance over time through proactive remediation.
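One of the simplest fairness tests to run during validation is a comparison of positive-prediction rates across groups, often called the demographic parity difference. The sketch below is one illustrative metric among many, not a complete fairness audit; the sample data and the 0.2 review threshold are hypothetical.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Share of positive (1) predictions per group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rate (0 = parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical validation sample: binary predictions and a group attribute.
predictions = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

gap = demographic_parity_difference(predictions, groups)
REVIEW_THRESHOLD = 0.2  # assumption: set by your governance committee
print(f"Selection-rate gap: {gap:.2f}")
if gap > REVIEW_THRESHOLD:
    print("Flag for fairness review before release")
```

A large gap does not prove the model is unfair, but it is a cheap signal that the model reviewer should look at the data and features before approving release.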

## From Pilot to Enterprise Scale: Measuring ROI, Monitoring, and Scaling AI

Scaling AI requires metrics-driven decisions, production-grade monitoring, and an organizational model that supports continuous improvement; the mechanism is converting pilot learnings into repeatable processes for deployment, monitoring, and retraining. The benefit is sustainable ROI and fewer stalled projects as AI moves from experiment to embedded capability. Below we define ROI metrics, monitoring practices, and a production checklist for hardening models at scale.

Market and adoption context to consider when planning scale, as commonly reported: up to 90 percent of AI models never escape the pilot phase; in 2024, approximately 22 percent of large organizations had deployed generative AI at scale; nearly 80 percent of organizations have adopted at least one AI tool; and the global AI market is projected to reach roughly $431 billion by the end of 2027. These figures underline that AI implementation requires ongoing monitoring and adjustment rather than one-time deployment.

Begin by defining KPIs that map directly to business outcomes. The table below maps business metrics to definitions and sample ways to measure them in production.

| Metric | Definition | How to measure / sample KPI |
|---|---|---|
| Cost savings | Reduction in operational expense | Compare baseline run-rate vs post-deployment cost per transaction |
| Latency | Time to respond to request | Median and 95th percentile response time in ms |
| Model accuracy | Predictive performance | Precision/recall or AUC on holdout and live traffic |
| Deployment frequency | Release cadence for models | Number of production model releases per quarter |
| Retraining time | Time to retrain and validate models | Mean time from data ingestion to validated retrained model |

Use these metrics to build dashboards and automated alerts that detect drift, performance drops, or cost anomalies; ongoing monitoring enables safe automation of retraining and rollbacks.
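Drift alerts need a numeric definition of "the input data has changed". One widely used option is the Population Stability Index (PSI), which compares the binned distribution of a feature in live traffic against a baseline. The sketch below is a plain-Python illustration; the bin count, the sample data, and the 0.25 alert level (a common rule of thumb, not a standard) are assumptions.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` against the `expected` baseline.

    Bins are equal-width over the baseline range; live values outside that
    range are clamped into the first or last bin.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline has no spread; cannot build bins")
    width = (hi - lo) / bins

    def bin_fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps avoids log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: training baseline vs shifted live traffic.
baseline = [i / 100 for i in range(100)]
live = [0.5 + i / 200 for i in range(100)]

score = psi(baseline, live)
ALERT_LEVEL = 0.25  # assumption: rule-of-thumb level for significant drift
print(f"PSI = {score:.2f}")
if score > ALERT_LEVEL:
    print("Drift alert: review the model and consider retraining")
```

In a dashboard, a check like this would run per feature on a schedule, with the alert feeding the retraining or rollback decision rather than triggering it blindly.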

### How do you define ROI and success metrics for AI initiatives?

Define ROI by linking model outcomes to monetary or operational KPIs: e.g., revenue lift, cost per unit reduction, or time saved per employee multiplied by labor cost. Establish baselines with A/B tests or randomized control groups and measure attribution over sufficient duration to capture downstream effects. Sample KPIs include reduction in average handling time, increase in conversion rate, precision/recall improvements, and cost-per-transaction changes. Because up to 90 percent of models reportedly never escape the pilot phase, use rigorous baselines and gating criteria to avoid becoming a statistic.

Clear ROI definitions allow stakeholders to make funding decisions and to identify which pilots merit scaling.
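The arithmetic behind these definitions is straightforward. The sketch below works through time saved multiplied by labor cost, a simple ROI ratio, and the relative lift between an A/B control and treatment group. All figures, including the 48 working weeks per year, are hypothetical inputs.

```python
def annual_labor_savings(hours_saved_per_week, employees, hourly_cost, weeks_per_year=48):
    """Time saved per employee multiplied by labor cost, annualized."""
    return hours_saved_per_week * employees * hourly_cost * weeks_per_year

def roi(total_benefit, total_cost):
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost

def relative_lift(control_rate, treatment_rate):
    """Relative improvement of the treatment group over the control baseline."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (treatment_rate - control_rate) / control_rate

# Hypothetical pilot: 2 hours saved per week for 50 agents at $40/hour.
benefit = annual_labor_savings(hours_saved_per_week=2, employees=50, hourly_cost=40.0)
cost = 120_000.0  # assumed build plus first-year run cost
print(f"Annual benefit: ${benefit:,.0f}")
print(f"ROI: {roi(benefit, cost):.0%}")

# Hypothetical A/B test: conversion 4.0% (control) vs 4.6% (with model).
print(f"Conversion lift: {relative_lift(0.040, 0.046):.0%}")
```

Keeping the baseline (control rate, pre-deployment cost) as an explicit input makes it harder to report a benefit that was never measured against anything.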

### What steps turn a successful pilot into scalable enterprise AI?

Turning a pilot into enterprise AI requires a productionization checklist: harden data pipelines, implement CI/CD for models, automate monitoring and retraining, ensure governance and compliance, document processes, and run knowledge-transfer sessions. A practical six-step version is to productionize the model, set up model monitoring and alerts, document model behavior and approvals, automate retraining pipelines, confirm governance controls are in place, and scale infrastructure with modular architectures. AI is no longer just a forward-thinking idea; it is a strategic necessity, and the challenge of adopting it is fundamentally organizational rather than technical: it requires leadership alignment, cultural readiness, and organized skill building.

Following these steps ensures pilots become sustainable, auditable, and beneficial parts of enterprise operations.

Informityx offers AI Development, Software Development, and Mobile App Development services. Its operating model strengthens clients’ in-house teams with skilled professionals, aiming for scalability, cost efficiency, improved productivity, and process efficiency while the client keeps full transparency and operational control. Its AI Development service builds customized AI solutions to enhance strategic decision-making, automate complex workflows, and accelerate digital transformation.